Introducing Text Input Moderation in VRChat

VRChat has always been a place where you can be whoever you want to be. Creative expression and genuine connection coexist here. That's not something we take lightly, and it's not something we're trying to change.

As VRChat grows, so does our responsibility to ensure it remains a place where everyone can show up and feel welcome, whether they're veterans or newcomers, younger or older, or from anywhere in the world. That means evolving how we approach safety.

Last year we committed to investing in Trust & Safety (previous blog here). Today we're sharing one of the steps in that investment.

What We're Launching

We're introducing automated text detection on most user-submitted text fields. If the text you enter violates our Community Guidelines, you may see an error when you try to submit it.

Text not final and subject to change.

This covers display names, profile bios, status messages, other profile text, world names, instance names, avatar names, and more.

This system does not cover instance text chat (chat boxes).

Coverage will expand to additional fields over time. This is a new layer of proactive prevention that improves the safety of our platform.

How We Use AI

The detection runs on a machine learning model that we host on our own hardware. Your text isn't sent to a third party for classification, and isn’t used to train any external vendor's model. The model we’re using was tuned by our team using tens of thousands of internally sourced, human-labeled examples. When you flag a false positive through the feedback button (more on that below), our team reviews it and uses it to improve the model over time. Corrections aren't automated – they are reviewed by a team of humans.

We’ve heard the community’s feedback about enforcement and false positive rates, so we prioritized keeping legitimate text flowing. Our internal target is a false positive rate below 1% – a target that outperforms human review. Currently, our model and process perform on par with or better than industry standards.

Holding to our false-positive target has made it challenging to maintain a high detection rate. Even so, our goal is for the vast majority of our players, who aren’t malicious, to keep using VRChat unaffected by false positives.
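As an illustration only, the trade-off between a false-positive target and detection rate can be sketched with a toy score threshold. Everything in this sketch is hypothetical: the scores, the labels, the function names, and the assumption that "false positive rate" means the share of legitimate text that gets blocked. It does not describe the actual model or how its threshold is chosen.

```python
from typing import Optional, Sequence


def false_positive_rate(scores: Sequence[float], harmful: Sequence[bool],
                        threshold: float) -> float:
    """Share of legitimate texts whose score is at or above the threshold."""
    legit = [s for s, bad in zip(scores, harmful) if not bad]
    if not legit:
        return 0.0
    return sum(s >= threshold for s in legit) / len(legit)


def detection_rate(scores: Sequence[float], harmful: Sequence[bool],
                   threshold: float) -> float:
    """Share of harmful texts whose score is at or above the threshold."""
    bad_scores = [s for s, bad in zip(scores, harmful) if bad]
    if not bad_scores:
        return 0.0
    return sum(s >= threshold for s in bad_scores) / len(bad_scores)


def lowest_threshold_within_target(scores: Sequence[float],
                                   harmful: Sequence[bool],
                                   max_fpr: float) -> Optional[float]:
    """Lowest observed score usable as a threshold with FPR strictly below max_fpr.

    Raising the threshold can only lower the false positive rate, so the first
    candidate that meets the target also keeps the detection rate as high as
    the target allows. Returns None if no observed score meets the target.
    """
    for candidate in sorted(set(scores)):
        if false_positive_rate(scores, harmful, candidate) < max_fpr:
            return candidate
    return None


# Hypothetical labeled sample: 200 legitimate texts and 10 harmful ones.
legit_scores = [0.05] * 197 + [0.40, 0.65, 0.92]
harmful_scores = [0.30, 0.55, 0.70, 0.80, 0.85, 0.90, 0.95, 0.97, 0.98, 0.99]
scores = legit_scores + harmful_scores
harmful = [False] * len(legit_scores) + [True] * len(harmful_scores)

threshold = lowest_threshold_within_target(scores, harmful, max_fpr=0.01)
print(threshold)                                         # 0.7
print(false_positive_rate(scores, harmful, threshold))   # 0.005
print(detection_rate(scores, harmful, threshold))        # 0.8
```

In this toy sample, a looser threshold of 0.30 would catch all ten harmful texts but block 1.5% of legitimate ones. Tightening it to stay under 1% lets two harmful texts through. That is the tension between keeping false positives low and keeping detection high.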

Separately: AI does not make moderation decisions at VRChat. Every account action -- every warning, every suspension, every ban -- is reviewed by a human. That hasn't changed, and this launch doesn't change it. What AI does here is help our reviewers: blocking content at input so no one else has to encounter it, surfacing patterns in user reports, and helping us catch our own false positives faster. These tools inform and assist human reviewers in making decisions. They do not replace them.

When It Gets It Wrong

Automated text detection is imperfect. Language is complicated! Context matters, irony exists, communities reclaim words, and a word that means one thing in one region means something completely different in another. So, some text is going to get flagged that shouldn't be.

If that happens to you, two things:

First: your account is fine. Getting your text rejected is not a moderation action. Nothing is logged against you. A false positive does not result in a strike, a ban, or any mark on your permanent record. In other words: if you see someone saying they got punished for their profile text, it’s because a human sent in a report about their profile, a human reviewed that report, and a human decided that the profile broke the rules.

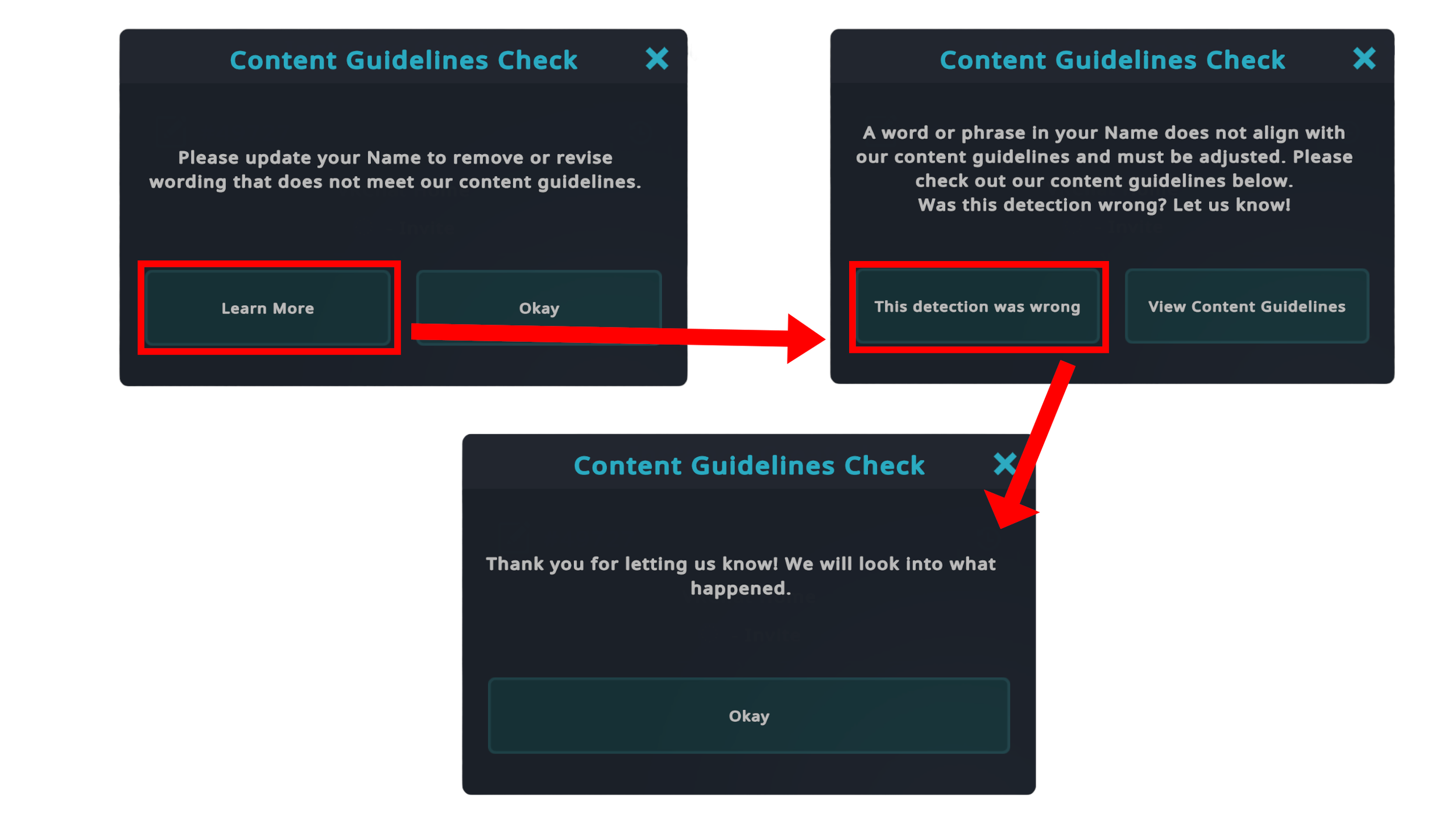

Second: tell us! The error prompt includes a button to flag the block as a mistake. Press it. Pressing that button routes the detection to our team. Those reported false positives are what we use to tune the model. If you want to help make this system better, this is the single most useful thing you can do.

Text not final and subject to change.

So, Why Are We Doing This?

Without any proactive detection, the burden of catching harmful content falls almost entirely on the people who encounter it. That means someone new, or young, or just trying to enjoy VRChat has to see harmful content in someone's profile or display name before any action can be taken. That's not a standard we're willing to keep.

User reports are essential, and they're not going anywhere. They're reactive by nature, though. This is our attempt at a proactive layer to sit alongside them.

What’s Next

We'll monitor for over-moderation, especially around regional language and context-specific usage, and adjust as we learn. Remember: when you flag a false positive, it goes to a real person and it gets used. We'll be honest about what's working and what isn't.

Text moderation is part of the broader Trust & Safety investment we committed to last year, and we're continuing to build it out. If anything about this system changes meaningfully -- what it covers, how it's trained, what data it sees -- we'll post about it.